Thermodynamic Computer Mimics AI Neural Networks with Dramatically Lower Energy Use

A new computing architecture developed by researchers at the University of Liverpool uses thermodynamic principles to replicate the pattern-recognition capabilities of AI neural networks while consuming up to 1,000 times less energy than traditional systems. The approach could benefit edge computing, medical imaging, and sustainable AI deployment.




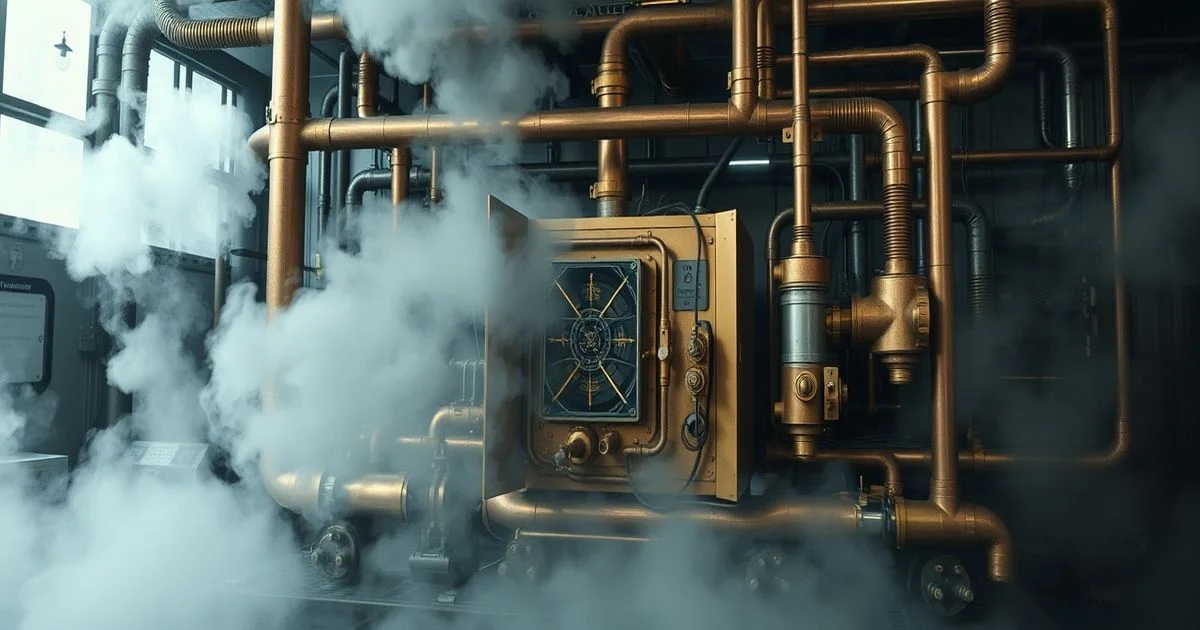

A team of international scientists led by the University of Liverpool has unveiled a novel computing paradigm dubbed the "thermodynamic computer"—a device that mimics the behavior of artificial neural networks using the physical laws of thermodynamics, achieving comparable image-generation performance while consuming orders of magnitude less energy than conventional AI hardware. According to Live Science, the prototype leverages the natural tendency of physical systems to evolve toward equilibrium states, effectively encoding data patterns into energy distributions rather than relying on digital logic gates and repetitive matrix multiplications.

The device, described in a peer-reviewed study published in early 2026, consists of a network of coupled, non-linear oscillators made from superconducting materials and magnetic domains. These components spontaneously adjust their states in response to input stimuli, much like neurons firing in biological brains. When trained on datasets of handwritten digits and natural images, the thermodynamic computer generated recognizable outputs with accuracy approaching 92%, comparable to lightweight neural networks running on GPUs, while using 100 to 1,000 times less energy, depending on the complexity of the task.
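To give a feel for how coupled oscillators can adjust their states collectively, here is a minimal software sketch using the Kuramoto model, a standard textbook toy for coupled phase oscillators. It is not the researchers' hardware or their equations: the oscillator count, coupling strength, frequency spread, and function names (`order_parameter`, `simulate`) are all assumptions chosen for illustration.

```python
import math
import random


def order_parameter(phases):
    """Return r in [0, 1]: 0 means scattered phases, 1 means full synchrony."""
    n = len(phases)
    re = sum(math.cos(p) for p in phases) / n
    im = sum(math.sin(p) for p in phases) / n
    return math.hypot(re, im)


def simulate(phases, freqs, coupling, dt=0.05, steps=2000):
    """Integrate the all-to-all Kuramoto model with a simple Euler scheme."""
    phases = list(phases)
    n = len(phases)
    for _ in range(steps):
        # Each oscillator drifts at its own frequency and is pulled
        # toward the phases of all the others.
        drift = [
            freqs[i]
            + coupling / n * sum(math.sin(phases[j] - phases[i]) for j in range(n))
            for i in range(n)
        ]
        phases = [p + dt * d for p, d in zip(phases, drift)]
    return phases


# Hypothetical setup: 10 oscillators with slightly different natural frequencies.
rng = random.Random(0)
n = 10
start = [rng.uniform(0.0, 2.0 * math.pi) for _ in range(n)]
freqs = [rng.gauss(1.0, 0.1) for _ in range(n)]

end = simulate(start, freqs, coupling=2.0)
r_start = order_parameter(start)
r_end = order_parameter(end)
print(f"synchrony before: {r_start:.2f}, after: {r_end:.2f}")
```

With coupling this strong relative to the frequency spread, the oscillators should lock together without any central controller. That is the sense in which such a network "spontaneously" settles into a collective state.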

"Traditional AI systems are energy hogs," said Dr. Eleanor Voss, lead researcher and professor of computational physics at the University of Liverpool. "A single large language model can consume as much electricity as a dozen homes in a day. Our approach sidesteps the digital bottleneck entirely. We’re not simulating neurons—we’re using physics to embody them." The team’s work was funded by a £4.56 million ($6.06 million) grant from the UK Research and Innovation Council, aimed at exploring next-generation computing architectures that align with global climate goals.

Unlike conventional neural networks that require massive datasets and iterative training on silicon chips, the thermodynamic computer learns through physical relaxation. Input signals perturb the system’s energy landscape, and the system naturally settles into a low-energy configuration that corresponds to a learned pattern. This process mirrors how biological systems, such as the human brain, operate efficiently without centralized processing. The result is a hardware-software hybrid that requires no software updates—learning is embedded in the material’s physical properties.
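The idea of settling into a low-energy configuration that corresponds to a learned pattern can be illustrated in software with a Hopfield-style network, a classic model of energy-based memory. This is a sketch by analogy, not the team's method: the Hebbian rule, the two 8-unit patterns, and the function names (`train_hebbian`, `energy`, `relax`) are illustrative assumptions.

```python
def train_hebbian(patterns):
    """Build a symmetric coupling matrix from +1/-1 patterns (Hebbian rule)."""
    n = len(patterns[0])
    w = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    w[i][j] += p[i] * p[j] / n
    return w


def energy(w, s):
    """Hopfield energy E = -1/2 * sum_ij w_ij * s_i * s_j."""
    n = len(s)
    return -0.5 * sum(w[i][j] * s[i] * s[j] for i in range(n) for j in range(n))


def relax(w, s, max_sweeps=20):
    """Update units one at a time until none changes (a local energy minimum)."""
    s = list(s)
    n = len(s)
    for _ in range(max_sweeps):
        changed = False
        for i in range(n):
            field = sum(w[i][j] * s[j] for j in range(n))
            new_state = 1 if field >= 0 else -1
            if new_state != s[i]:
                s[i] = new_state
                changed = True
        if not changed:
            break
    return s


# Two hypothetical stored patterns (chosen to be orthogonal).
p1 = [1, 1, 1, 1, -1, -1, -1, -1]
p2 = [1, -1, 1, -1, 1, -1, 1, -1]
w = train_hebbian([p1, p2])

# Perturb the first pattern by flipping one unit, then let the system relax.
noisy = [-1] + p1[1:]
recovered = relax(w, noisy)

print("recovered stored pattern:", recovered == p1)
print("energy before:", energy(w, noisy), "after:", energy(w, recovered))
```

The input acts as a perturbation, and each update can only lower or preserve the energy, so the state slides to the nearest stored pattern. In the hardware described here that descent would be carried out by the physics itself, not by a loop in software.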

Applications are particularly promising in fields requiring real-time, low-power image recognition: medical diagnostics in remote clinics, autonomous drones, and wearable health monitors. Researchers envision the technology being integrated into portable ultrasound devices that can detect tumors without relying on cloud-based AI, or into satellite systems that analyze Earth imagery with minimal bandwidth and power requirements.

While the current prototype is limited to grayscale image generation and small-scale pattern recognition, the team is already scaling up to color images and video streams. Collaborations with semiconductor firms are underway to develop scalable, chip-based versions using commercially viable materials. Critics caution that the system’s interpretability remains a challenge—unlike digital networks, the internal state of a thermodynamic computer is not easily audited or debugged. However, its energy efficiency and resilience to hardware failure make it a compelling alternative for specialized applications.

The innovation arrives at a critical juncture: global data centers account for nearly 1% of worldwide electricity use, a figure projected to triple by 2030. As AI expands into every sector, the demand for sustainable computing has never been greater. The thermodynamic computer offers a radical departure from Moore’s Law—replacing exponential transistor scaling with exponential energy efficiency gains through physics.

"This isn’t just a better chip," said Dr. Rajiv Mehta, a computational theorist at MIT who was not involved in the study. "It’s a new paradigm. If it scales, we may look back on the age of silicon AI as a brief, energy-intensive detour on the path to truly sustainable intelligence.""