Portable AI Workstation Breaks Limits: 120B Model Runs on Compact Rig

An amateur hardware engineer has built a compact portable workstation that runs the 120B-parameter GPT-OSS model at up to 165 tokens per second, pushing the boundaries of edge AI inference. The build, which pairs a Ryzen 9 9950X3D with an RTX PRO 6000, challenges conventional wisdom about power and portability in AI hardware.

In a demonstration of edge AI capabilities, a hardware enthusiast known online as neintailedfoxx has unveiled a custom-built portable workstation that runs the GPT-OSS 120B-parameter model at more than 150 tokens per second, with no data center or cloud infrastructure involved. The system, housed in a FormD T1 2.5 Gunmetal mini-ITX case, combines high-end components with aggressive undervolting and thermal optimization to reach this performance in a footprint smaller than a standard laptop.

The workstation’s heart is the AMD Ryzen 9 9950X3D, a 16-core processor with AMD’s 3D V-Cache, a large stacked L3 cache that speeds up data access in cache-sensitive workloads. It is paired with an NVIDIA RTX PRO 6000 workstation GPU, a class of card typically reserved for professional visualization and simulation tasks, and 96GB of DDR5-6800 RAM to handle the memory demands of context-heavy AI workloads. The builder reports 150–165 tokens per second using LM Studio on Windows, a rate that rivals some cloud-based API endpoints while keeping full local control and avoiding network round-trips.
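To put the reported decode rate in perspective, here is a minimal sketch of the arithmetic. The 1,000-token response length is an assumption chosen for illustration, and the helper name `generation_time_s` is hypothetical; only the 150–165 tokens-per-second range comes from the builder's report.

```python
def generation_time_s(num_tokens: int, tokens_per_second: float) -> float:
    """Seconds needed to emit num_tokens at a steady decode rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# Assumed response length, for illustration only.
RESPONSE_TOKENS = 1000

# Reported range from the builder: 150-165 tokens per second.
slow = generation_time_s(RESPONSE_TOKENS, 150.0)
fast = generation_time_s(RESPONSE_TOKENS, 165.0)

print(f"{RESPONSE_TOKENS} tokens: {fast:.1f}-{slow:.1f} s")
# prints "1000 tokens: 6.1-6.7 s"
```

This covers generation only. Prompt processing, which determines the wait before the first token appears, is not part of the reported figure and is left out of the estimate.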

Thermal management was a key challenge. To work within the compact chassis, the builder used a 120mm fan sourced from AliExpress and originally designed for the NVIDIA RTX 4090 FE; it fits an 18mm profile while reportedly delivering airflow equivalent to a standard 25mm fan. Combined with a flipped GPU orientation and a top-intake airflow configuration, this keeps both CPU and GPU under 80°C under sustained load. The CPU is undervolted using AMD’s Curve Optimizer (-25/-30 per CCD), while the GPU runs at 2700MHz at just 0.89V, with a 500W power limit to maintain stability. The RAM is tuned to 6000MT/s at CL28-36-35-30 with a 2233MHz FCLK to maximize memory bandwidth.

Storage is a multi-drive array made up of a Crucial T710 4TB, Samsung 990 Pro 4TB, WD Black SN850X 8TB, and TEAMGROUP CX2 2TB (18TB in total), each selected for the high sequential read speeds that fast model loading depends on. A Corsair SF1000 PSU, paired with custom cables from Dreambigbyray, ensures clean power delivery in a compact form factor.

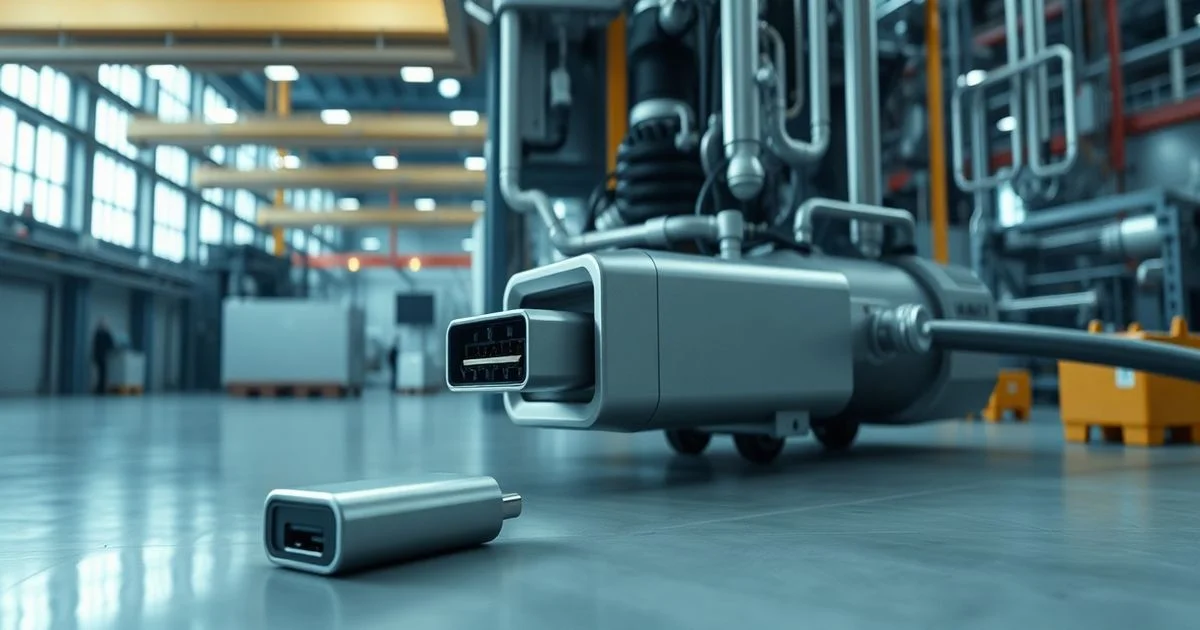

Notably, the builder expressed regret at not being able to use a Threadripper CPU for higher memory bandwidth, citing the lack of mini-ITX Threadripper motherboards as a critical industry gap. This highlights a broader limitation in the consumer hardware ecosystem: while AI workloads demand more bandwidth and memory, the market for compact, high-end workstation platforms remains underdeveloped.
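The bandwidth argument can be made concrete with the standard peak-bandwidth formula: transfer rate times bytes per transfer times channel count. The sketch below assumes the conventional 64-bit width per memory channel and the dual-channel layout of AM5 boards. The four-channel row is a hypothetical comparison at the same transfer rate, not a figure from the builder, and real Threadripper platforms may run different memory speeds.

```python
def peak_bandwidth_gbs(transfer_rate_mts: float, channels: int,
                       bus_width_bits: int = 64) -> float:
    """Theoretical peak DRAM bandwidth in GB/s (decimal gigabytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return transfer_rate_mts * 1e6 * bytes_per_transfer * channels / 1e9


# The build as tuned: 6000 MT/s on a dual-channel AM5 platform.
dual = peak_bandwidth_gbs(6000, channels=2)

# Hypothetical: the same transfer rate on a four-channel platform.
quad = peak_bandwidth_gbs(6000, channels=4)

print(f"dual-channel: {dual:.0f} GB/s")   # prints "dual-channel: 96 GB/s"
print(f"quad-channel: {quad:.0f} GB/s")   # prints "quad-channel: 192 GB/s"
```

These are theoretical ceilings. Sustained bandwidth is lower in practice, but the ratio shows why doubling the channel count matters more than any timing tweak available on a two-channel board.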

While the build is not a commercial product, it has sparked discussions in communities like r/LocalLLaMA and hardware forums about the feasibility of portable, high-performance AI rigs for researchers, developers, and even journalists needing on-the-ground AI analysis. According to PortableApps.com, the growing ecosystem of portable software tools, including AI inference frameworks, supports the trend toward decentralized, local computing. The platform, which hosts over 1,400 portable applications and has seen over 1.2 billion downloads, reflects a broader shift toward software that runs independently of system installations, a philosophy mirrored in this hardware build.

Though the system runs on Windows for now, the builder plans to test Linux for potential performance gains. If those gains materialize, the build could set a new benchmark for edge AI, showing that powerful inference doesn’t require massive racks or cloud subscriptions. As AI models grow larger and more demanding, it may serve as a blueprint for the next generation of mobile AI workstations that combine power with portability.