Altman Defends AI Energy Use by Comparing It to Human Evolutionary Costs

OpenAI CEO Sam Altman has sparked debate by arguing that criticisms of AI's energy consumption overlook the far greater resource demands of raising and sustaining human life over millennia. In a provocative analogy, he contrasts the efficiency of modern AI systems with the evolutionary overhead of human survival — from childbirth to predator avoidance.

OpenAI CEO Sam Altman has ignited a new front in the ongoing debate over artificial intelligence’s environmental footprint, arguing that concerns about AI’s energy use are misplaced when compared to the staggering resource costs of human biological evolution and reproduction. Speaking at a closed-door technology symposium in San Francisco, Altman challenged critics to consider the full historical context of energy consumption — not just the kilowatt-hours of data centers, but the millennia of calories, land, water, and labor required to raise even a single human being.

"You think AI is inefficient? Try raising 100 billion humans," Altman said, according to The Register. The comment, initially dismissed as hyperbole, has since circulated widely among technologists, ethicists, and climate scientists. Altman’s point was not to dismiss energy concerns but to reframe them: while AI systems consume significant power, he argued, they do so with unparalleled efficiency relative to the output they generate, whether in scientific discovery, medical diagnostics, or climate modeling.

Altman’s analogy draws on evolutionary biology. Over hundreds of thousands of years, humans have consumed vast quantities of energy not just for sustenance but for survival: hunting, building shelters, defending against predators, and raising offspring over decades. A single human requires approximately 2,000 dietary calories (kilocalories) per day, roughly 8.4 million joules, just to maintain basic biological functions. Over a 75-year lifespan, that comes to more than 225 gigajoules. Multiply that by the roughly 100 billion humans who have ever lived, generously assuming each reached 75, and the cumulative total, on the order of 23 zettajoules, dwarfs the annual electricity consumption of today’s entire global AI infrastructure.
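The food-energy arithmetic above can be checked with a few lines of Python. This is a minimal sketch under the article's own simplifying assumptions: a constant intake of 2,000 kcal per day, a 75-year lifespan for every person, and 100 billion people ever born. The factor of 4,184 joules per kilocalorie is the standard thermochemical value; the names `KCAL_TO_J`, `DAYS_PER_YEAR`, and `lifetime_energy_joules` are illustrative and not taken from any source.

```python
# Back-of-envelope check of the food-energy figures quoted in the article.
# Assumptions (from the article, not measured data): 2,000 kcal/day,
# a 75-year lifespan for everyone, and 100 billion humans ever born.

KCAL_TO_J = 4184          # joules per dietary Calorie (kilocalorie)
DAYS_PER_YEAR = 365.25    # average year length, counting leap years


def lifetime_energy_joules(kcal_per_day: float, years: float) -> float:
    """Food energy consumed over a lifetime at a constant daily intake."""
    return kcal_per_day * KCAL_TO_J * DAYS_PER_YEAR * years


daily_j = 2000 * KCAL_TO_J                     # energy per day, in joules
lifetime_j = lifetime_energy_joules(2000, 75)  # one 75-year life
all_humans_j = lifetime_j * 100e9              # 100 billion lifetimes

print(f"Per day:           {daily_j / 1e6:.1f} MJ")
print(f"Per 75-year life:  {lifetime_j / 1e9:.0f} GJ")
print(f"100 billion lives: {all_humans_j / 1e21:.0f} ZJ")
```

Running it gives about 8.4 MJ per day, 229 GJ per lifetime, and roughly 23 ZJ in total, consistent with the figures in the text. Because average lifespans for most of history were much shorter than 75 years, the total likely overstates the food energy actually consumed.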

"We’ve spent hundreds of thousands of years optimizing for survival, not efficiency," Altman added. "AI, by contrast, is being optimized for efficiency from day one. We’re not replicating human biology — we’re building something fundamentally different, and far more scalable."

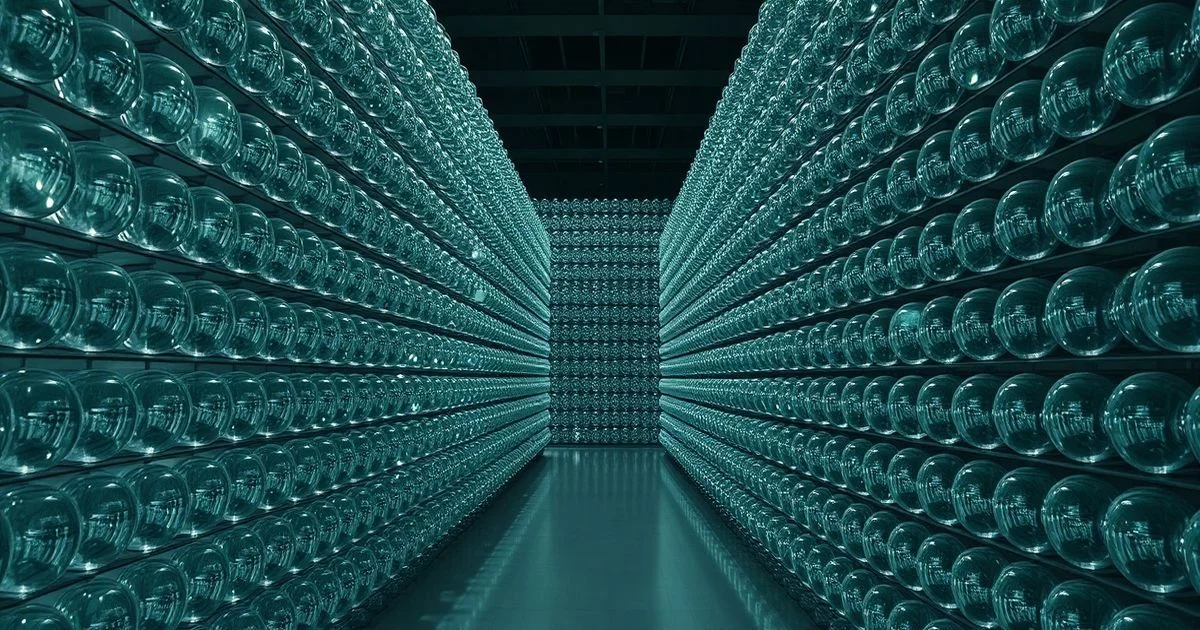

Industry analysts note that while global AI data centers consumed an estimated 460 terawatt-hours in 2025, roughly the annual electricity consumption of a country the size of France, this pales in comparison to the energy used by the global food system, which the UN Food and Agriculture Organization estimates accounts for about 30% of world energy consumption, much of it to feed and sustain the human population. Transportation, heating, and industrial manufacturing further dwarf AI’s footprint.
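Comparing the data-center estimate with the cumulative human food-energy figure requires putting both in the same unit. The sketch below reuses the article's numbers and the exact conversion 1 TWh = 10^12 Wh × 3,600 J/Wh; the variable names are illustrative. Note that it sets a total accumulated over all of human history against a single year of electricity use.

```python
# Put the article's energy figures on a common unit (joules).
# Inputs are the article's estimates, not independent data.

J_PER_TWH = 1e12 * 3600  # 1 TWh = 1e12 Wh, and 1 Wh = 3,600 J

ai_twh_per_year = 460                        # quoted data-center estimate
ai_j_per_year = ai_twh_per_year * J_PER_TWH

# Cumulative food energy: 2,000 kcal/day x 4,184 J/kcal x 365.25 days
# x 75 years x 100 billion people (the article's simplifying assumptions).
human_j_total = 2000 * 4184 * 365.25 * 75 * 100e9
human_twh_total = human_j_total / J_PER_TWH

# How many years of data-center electricity equal the cumulative human total?
ratio_years = human_j_total / ai_j_per_year

print(f"Data centers, one year:  {ai_j_per_year:.2e} J")
print(f"All humans, all history: {human_twh_total:,.0f} TWh")
print(f"Equivalent to about {ratio_years:,.0f} years of data-center use")
```

With these inputs the cumulative human total comes to roughly 6.4 million TWh, about 14,000 times a single year at 460 TWh.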

Environmental advocates, however, caution against using historical human energy use as a justification for unchecked AI expansion. "It’s a rhetorical sleight of hand," said Dr. Elena Ruiz, an environmental economist at Stanford. "We can’t justify future emissions by pointing to past inefficiencies. The goal should be to minimize harm going forward — not to normalize escalation under the guise of historical precedent."

Altman’s remarks also come amid growing regulatory scrutiny of AI’s environmental impact in the EU and U.S., with proposed legislation requiring transparency in energy consumption metrics for large models. OpenAI has responded by publishing detailed energy reports for its GPT-4o system, showing a 40% reduction in energy use per token since 2023 through architectural improvements and renewable-powered data centers.

While Altman’s comparison may seem flippant, it underscores a deeper point: technological progress often looks wasteful until its scale and efficiency are put in context. As Linux creator Linus Torvalds once quipped in an unrelated interview about his potential successor, "The best systems aren’t the ones that use the least power, but the ones that do the most with what they have." In that spirit, Altman may be less defending AI’s energy use than advocating for a broader, more historically informed perspective on what efficiency truly means.

As the world races toward AGI, the conversation must evolve beyond simple kWh metrics. The real question may not be how much energy AI uses — but whether it’s the most effective tool we have to solve the energy crises we’ve created ourselves.