Fine-Tuning SDXL with Childhood Photos Generates Emotionally Resonant AI Art

An experimental AI project fine-tunes SDXL on personal childhood photographs, producing haunting, audio-reactive imagery that blurs the line between memory and machine. The artist’s LoRA model, paired with real-time audio visualization tools, has sparked new discussion about AI and emotional memory.

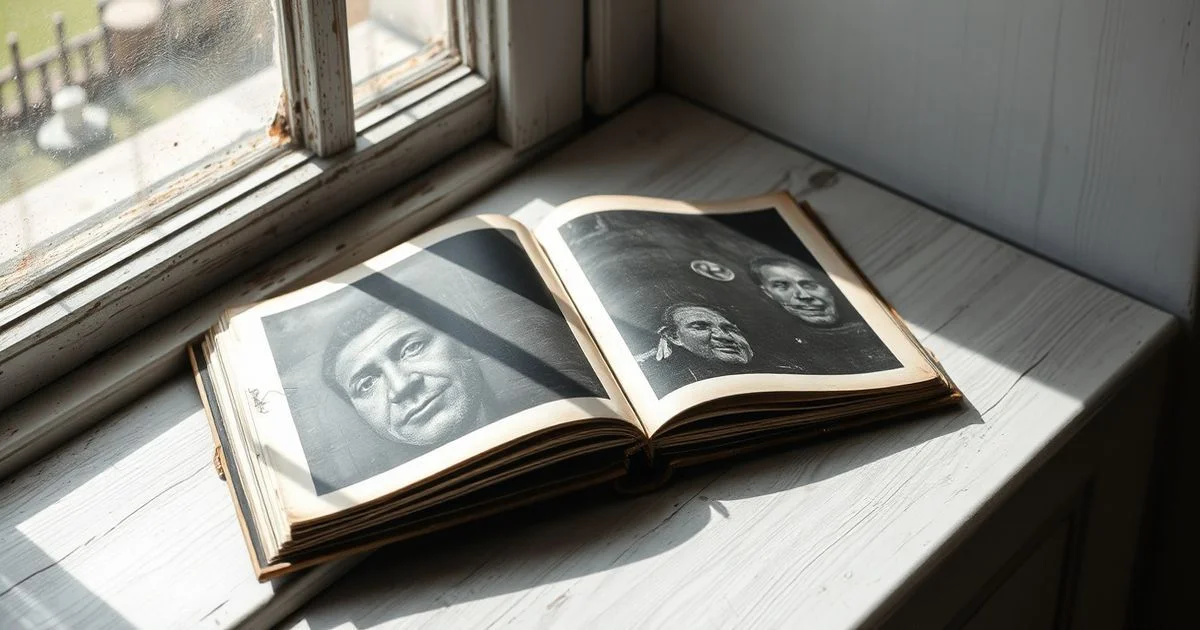

In a project that fuses personal nostalgia with cutting-edge artificial intelligence, an anonymous artist known online as Real-Philosopher-895 has fine-tuned the Stable Diffusion XL (SDXL) model on a curated dataset of 60 childhood photographs from their family album. When synchronized with audio-reactive geometry systems, the resulting visuals produce surreal, emotionally charged imagery that appears to evoke long-forgotten memories, not through data alone but through an uncanny resonance with human affect.

The project, first shared in Reddit’s r/StableDiffusion community, uses a custom LoRA (Low-Rank Adaptation) trained exclusively on intimate, low-resolution images of the artist’s early life. A LoRA leaves the base model’s weights frozen and learns a small set of low-rank adjustment matrices, which makes it practical to adapt a model as large as SDXL with only a few dozen images. Unlike conventional AI art training sets drawn from public datasets, this one consisted of deeply personal, often imperfect snapshots: blurred birthday parties, faded school portraits, and candid family moments. According to the artist, the outcome was not merely aesthetic but psychological: “It felt like my younger self was speaking through the algorithm,” they wrote in the original post.
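The article does not say which training script, rank, or settings the artist used, so the following is only a toy sketch of the general LoRA technique (Hu et al., 2021), not the artist’s setup. The frozen weight matrix W receives an additive low-rank update (alpha / rank) · B A, and only B and A are trained. The tiny matrices, the 1280 × 1280 layer size, and rank 16 are illustrative assumptions.

```python
from typing import List, Tuple

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain-Python matrix product; fine for toy sizes."""
    return [
        [sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def lora_merge(w: Matrix, b: Matrix, a: Matrix, alpha: float, rank: int) -> Matrix:
    """Return W + (alpha / rank) * (B @ A).

    W (d_out x d_in) stays frozen during training; only B (d_out x rank)
    and A (rank x d_in) are learned from the fine-tuning images.
    """
    scale = alpha / rank
    delta = matmul(b, a)
    return [[w[i][j] + scale * delta[i][j] for j in range(len(w[0]))] for i in range(len(w))]


def param_counts(d_out: int, d_in: int, rank: int) -> Tuple[int, int]:
    """(full fine-tuning parameters, LoRA parameters) for one weight matrix."""
    return d_out * d_in, rank * (d_out + d_in)


if __name__ == "__main__":
    # Toy values chosen for illustration; not the artist's settings.
    w = [[1.0, 0.0], [0.0, 1.0]]
    b = [[1.0], [0.0]]  # 2 x 1
    a = [[0.5, 0.5]]    # 1 x 2
    print(lora_merge(w, b, a, alpha=1.0, rank=1))  # [[1.5, 0.5], [0.0, 1.0]]
    print(param_counts(1280, 1280, 16))           # (1638400, 40960)
```

The parameter count shows why the approach suits small personal datasets: for a 1280 × 1280 layer at rank 16, the adapter trains roughly 2.5% as many values as full fine-tuning would.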

When fed into Archaia’s audio-reactive geometry engine, a system that translates sound frequencies into evolving geometric forms, the LoRA-generated images morph in real time, responding to ambient music with fluid, organic transitions. A second demonstration, using StreamDiffusion (a pipeline for real-time image generation) and an updated version of Auratura, was presented as further evidence of the model’s expressive range. Viewers reported feelings of melancholy, warmth, and disorientation while watching the videos, which suggests the model captured not only visual patterns but also some of the emotional tone embedded in the source material.
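Archaia’s engine is not documented in the article, so this is only a generic sketch of how audio-reactive systems commonly work: split each audio frame into frequency bands with a Fourier transform, smooth the band levels over time, and map them to shape parameters. The band edges, the smoothing factor, and the `geometry_params` mapping are hypothetical choices for illustration, and a naive DFT stands in for the FFT a real-time system would use.

```python
import cmath
import math
from typing import Dict, List, Optional, Sequence, Tuple


def band_levels(frame: Sequence[float], sample_rate: int,
                bands: Sequence[Tuple[float, float]]) -> List[float]:
    """Summed DFT magnitude of one audio frame inside each [low_hz, high_hz) band.

    A naive O(n^2) DFT keeps the sketch dependency-free; a real-time system
    would use an FFT instead.
    """
    n = len(frame)
    magnitudes = [
        abs(sum(frame[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)))
        for k in range(n // 2 + 1)  # bins 0 .. Nyquist
    ]
    bin_hz = sample_rate / n
    return [
        sum(m for k, m in enumerate(magnitudes) if low <= k * bin_hz < high)
        for low, high in bands
    ]


class Smoother:
    """Exponential moving average, so shapes ease between frames instead of jittering."""

    def __init__(self, alpha: float) -> None:
        self.alpha = alpha
        self.value: Optional[float] = None

    def update(self, x: float) -> float:
        if self.value is None:
            self.value = x
        else:
            self.value = self.alpha * x + (1 - self.alpha) * self.value
        return self.value


def geometry_params(levels: List[float], smoothers: List[Smoother]) -> Dict[str, float]:
    """Hypothetical mapping of (bass, mid, high) levels to shape parameters."""
    total = sum(levels) or 1.0  # avoid dividing by zero on silence
    bass, mid, high = (s.update(x / total) for s, x in zip(smoothers, levels))
    return {"scale": 1.0 + bass, "rotation_speed": mid, "distortion": high}
```

In a live setup, each new audio frame would pass through `band_levels` and then `geometry_params`, reusing the same three `Smoother` objects across frames so that the parameters drift smoothly rather than jumping.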

This approach diverges sharply from typical AI art workflows, which prioritize technical precision over subjective meaning. According to Civitai, a leading platform for sharing AI models, LoRA fine-tuning is increasingly used for “micro-editing” and style adaptation, particularly in ComfyUI workflows such as the Z-Image Base Inpainting system. Few practitioners, however, have applied it to autobiographical datasets with such emotional intent. “Most LoRAs are trained on aesthetics: styles, lighting, poses,” notes a Civitai community moderator. “This is the first time we’ve seen one trained on the weight of memory.”
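The “micro-editing” use is easier to picture once a trained LoRA is seen as an additive weight delta that can be scaled. The sketch below assumes each LoRA’s delta has already been computed as (alpha / rank) · B A. It shows how a strength multiplier, similar in spirit to the per-LoRA strength settings that tools such as ComfyUI expose, dials an adapter in partially, and how several adapters can be stacked on one base weight. The function name and toy deltas are hypothetical, and real loaders apply this across many layers at once.

```python
from typing import List, Sequence, Tuple

Matrix = List[List[float]]


def apply_loras(w: Matrix, loras: Sequence[Tuple[Matrix, float]]) -> Matrix:
    """Return W + sum(strength * delta) over all (delta, strength) pairs.

    Each delta is assumed to be a precomputed (alpha / rank) * B @ A for one
    LoRA. Strength 0.0 leaves the base weight untouched, 1.0 applies the LoRA
    fully, and values in between give partial, finer-grained edits.
    """
    out = [row[:] for row in w]  # copy so the base weight is never modified
    for delta, strength in loras:
        for i, row in enumerate(delta):
            for j, value in enumerate(row):
                out[i][j] += strength * value
    return out


if __name__ == "__main__":
    base = [[1.0, 0.0], [0.0, 1.0]]
    memory_delta = [[0.5, 0.5], [0.0, 0.0]]    # hypothetical "childhood photos" LoRA
    style_delta = [[0.0, 0.0], [0.25, -0.25]]  # hypothetical style LoRA
    print(apply_loras(base, [(memory_delta, 0.5), (style_delta, 1.0)]))
    # [[1.25, 0.25], [0.25, 0.75]]
```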

Merriam-Webster defines “fine-tune” as making small, precise adjustments to optimize performance, but the artist’s work goes beyond technical optimization. It suggests that AI, when trained on intimate human artifacts, can become a vessel for emotional archaeology. The algorithm does not merely replicate images; it reconstructs mood, the texture of time, and the fragility of recollection.

Art historians and cognitive scientists are now taking notice. Dr. Elena Voss, a researcher at the Institute for Digital Humanities, commented: “This isn’t just AI art. It’s digital memorialization. The machine becomes a medium for re-experiencing the past—not as data, but as feeling.”

The artist has released all project files, tutorials, and training datasets on their YouTube channel and Patreon, encouraging others to experiment with personal archives. “We’re taught to think of AI as a tool for creation,” they said in a follow-up video. “But what if it’s also a tool for healing? For remembering what we’ve forgotten?”

As generative AI continues to evolve, this project offers a profound counter-narrative: the most powerful models may not be those trained on millions of images, but those trained on the few that matter most to a single soul.