Ace-Step 1.5 Revolutionizes AI Music Generation with Unprecedented Speed and Intuition

Users are praising Ace-Step 1.5 as a breakthrough in AI-generated music, citing its ability to produce high-quality, emotionally resonant songs in under four minutes—even on outdated hardware. The model’s uncanny knack for turning lyrical errors into artistic happy accidents is reshaping how creators approach AI-assisted composition.

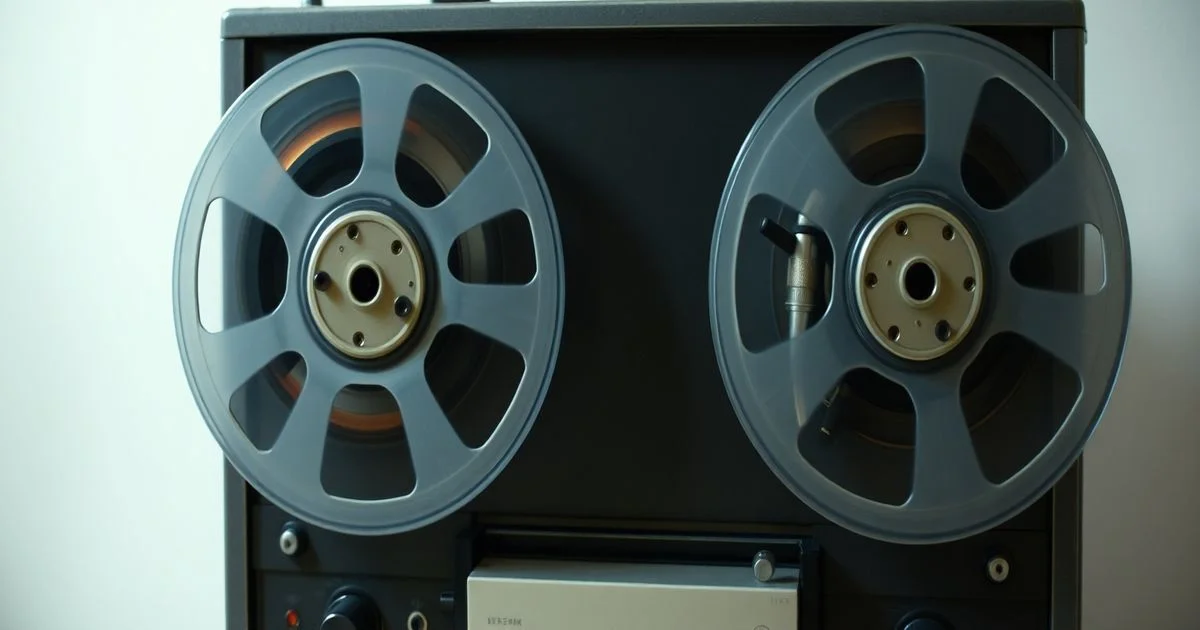

In a small corner of the AI enthusiast community, a quiet revolution in music generation is unfolding. Ace-Step 1.5, a recently updated artificial intelligence model designed for audio synthesis, is drawing widespread acclaim from amateur and professional creators alike for its speed, intuitive interface, and surprising artistic sensitivity. According to a detailed user testimonial posted on Reddit’s r/StableDiffusion, the model outperforms every other AI music tool the author has tested, even though it was running on an aging GPU.

The user, who goes by /u/ExistentialTenant, describes generating a three-minute song in just 200 seconds. By the user's account, two to three additional tracks can be produced in the time it takes to listen to one composition. Output at this pace once required a professional studio and audio engineers; it is now within reach of anyone with a modest computer. The implications for independent artists, content creators, and even educators are significant.

What sets Ace-Step 1.5 apart isn’t just its speed, but its uncanny ability to interpret vague creative prompts and transform them into emotionally coherent pieces. The user recounts crafting a song inspired by Celine Dion’s "Because You Loved Me," using only a handful of genre descriptors—pop ballad, orchestral swell, emotional crescendo—and lyrics co-written with Google’s Gemini AI. Adjusting duration and BPM required minimal input, yet the output was immediately compelling. "It hardly took any effort at all," the user wrote, "yet I loved every result."

Even more astonishing is the model’s handling of imperfections. When Ace-Step misinterpreted lyrical phrasing or generated nonsensical lines, the result wasn’t jarring—it was unexpectedly poetic. "It somehow still screwed up in a way that still sound great," the user observed. This phenomenon, which some researchers have termed "generative grace," suggests the model doesn’t merely replicate patterns but interprets emotional intent, compensating for technical flaws with stylistic intuition. Such behavior blurs the line between algorithmic output and human-like creativity.

Despite its breakthroughs, Ace-Step 1.5 is not without limitations. The user notes that inpainting—modifying specific sections of an existing composition—and generating clean, standalone instrumentals remain challenging. These are known pain points in generative audio models, where preserving structural coherence while altering content is notoriously difficult. Yet even these shortcomings are framed not as failures, but as areas of ongoing exploration. "I feel really fortunate to have access to something like Ace-Step," the user concluded, a sentiment echoed across several niche forums where early adopters are sharing their creations.

Industry analysts are taking notice. ACE-Step is released as an open-source project by ACE Studio and StepFun, and its rapid evolution reflects community-driven refinement that is gaining momentum. The model’s performance on low-end hardware further signals a democratizing trend: AI creativity is no longer the privilege of those with access to cloud GPUs or enterprise-grade systems. As tools like Ace-Step mature, they may redefine the role of the musician, not as a technician but as a curator of machine-generated emotion.

For now, Ace-Step 1.5 stands as a testament to how far generative AI has come—not in raw computational power, but in its ability to understand, adapt, and elevate human intention. In an era saturated with AI tools that feel mechanical, Ace-Step feels alive. And that, perhaps, is the most revolutionary thing of all.